Every fintech executive knows the headache: identifying risk fast enough to prevent losses without strangling growth. Yet many firms still rely on legacy systems that can’t keep up with today’s real-time transaction volumes. The stakes couldn’t be higher: the total transaction value of digital payments is expected to reach USD 36.9 trillion by 2030, which means risk decisions now happen in milliseconds, not hours. A mistimed call can lead to fraud losses, regulatory fines, or customer churn.

Industry leaders are responding differently. From Singapore to London, fintechs are hiring AI agent developers to build intelligent systems that learn, adapt, and make smarter risk judgments. According to McKinsey, firms embedding AI in finance functions are outperforming peers by double-digit margins.

This article explores why. It unpacks the limits of traditional risk analysis, how AI agents in fintech change the game, the benefits they bring, and what to watch for. You’ll also see how an AI agent development company like Debut Infotech helps fintechs future-proof their risk analysis with custom AI agents.

The Current State of Traditional Methods for Risk Analysis

Quick question: how do traditional fintech companies identify, assess, and mitigate potential threats to their operations?

If you’ve been in the sector for long, you’re probably thinking of tried-and-true methods like credit scoring, rule-based filters, and statistical forecasting, and you’re correct. These methods have long been the bedrock of financial risk assessment.

For example, fintech companies use indicators such as income statements, repayment histories, and bureau scores to evaluate a borrower’s creditworthiness. Likewise, they create and use fraud detection systems that flag transactions based on predefined thresholds.

These methods worked well enough that, once deployed, they often went untouched for months or even years.

But that is exactly the problem: a model left untouched for months cannot respond to new threats. These methods were also designed for an era when transaction volumes were lower, data sources were limited, and fraud tactics evolved slowly.

So while these techniques worked then, the fintech industry is steadily outgrowing them.

According to J.P. Morgan, rapid digitisation worldwide is transforming all aspects of our lives. The company’s proprietary Power Theme outlines five mega themes shaping the future of payments and notes that these five themes account for about $54 trillion in global payment flows.

Read that again: $54 trillion!

Traditional risk analysis methods simply can’t keep up.

The problem is latency and rigidity. Batch data processing means insights arrive hours, or even days, after the event. On top of that, risk teams must sift through thousands of false positives because rule engines lack contextual understanding.

Fintech firms operate at digital speed. When decisions must happen in milliseconds, traditional methods fail to keep pace. Static models can’t interpret behavioural nuances, and manual reviews can’t scale with transaction growth. The result is a system that’s compliant and auditable but not agile. This is where AI agents in fintech offer a more adaptive, self-learning alternative.

AI Agents in Fintech Risk Analysis: What They Are & Where They Fit

What do we mean by “agents” in a risk context?

Think of software that can perceive incoming data, reason with models and policies, and act inside a workflow. An agent ingests streams from core banking, payments, KYC vendors, devices, and open-source signals. It scores risk, triggers step-up checks, opens a case, or recommends a decision to an analyst.

Crucially, it learns from feedback, so the next decision is better than the last. The European Central Bank notes that this class of systems can strengthen fraud detection, monitoring, and other risk functions when governed well.

So, why is AI in finance useful now?

Real-time payments and digital channels have changed the speed of risk. In 2023, there were 266.2 billion real-time transactions globally, up 42.2% year on year, which compresses the time window for fraud and credit decisions to seconds. If your tooling can’t keep up, losses and customer friction rise.

So, where do AI agents fit in day-to-day risk operations?

- Fraud and AML. Agents combine anomaly detection with rules and context. They pull device fingerprints, behavioural patterns, and counterparty graphs, then decide whether to approve, step up, or block.

- Credit risk. Beyond static scorecards, agents enrich thin-file applicants with alternative data, monitor repayment signals, and escalate early-warning cases to collections. The ECB highlights improvements in predictive accuracy across risk use cases, with the caveat that explainability and controls must be maintained.

- Operational and cyber risk. Agents watch process telemetry and vendor feeds, correlate alerts, and route incidents.

- Regulatory reporting and compliance. Agents assemble audit trails, document the rationale behind decisions, and surface model evidence to risk and compliance teams.
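To make the fraud bullet more concrete, here is a minimal Python sketch of the perceive-reason-act pattern described earlier. The agent keeps a per-account baseline, scores how unusual a new amount is, applies a hard rule, and uses device context to choose between approving, stepping up, or blocking. Everything here is illustrative. `AccountProfile`, `decide`, the `counterparty_flagged` flag (standing in for a counterparty-graph check), and the z-score thresholds of 3 and 6 are all assumptions. A simple z-score also stands in for whatever anomaly model a production agent would actually use.

```python
import math
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    APPROVE = "approve"
    STEP_UP = "step_up"  # e.g. request additional authentication
    BLOCK = "block"


@dataclass
class AccountProfile:
    """Per-account baseline of transaction amounts, updated incrementally (Welford's algorithm)."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean
    known_devices: set = field(default_factory=set)

    def update(self, amount: float, device_id: str) -> None:
        self.n += 1
        delta = amount - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (amount - self.mean)
        self.known_devices.add(device_id)

    def zscore(self, amount: float) -> float:
        """Standard deviations from the account's mean (0.0 when history is too short)."""
        if self.n < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.n - 1))
        return 0.0 if std == 0 else (amount - self.mean) / std


@dataclass
class Transaction:
    amount: float
    device_id: str
    counterparty_flagged: bool  # hypothetical result of a counterparty-graph check


def decide(txn: Transaction, profile: AccountProfile,
           z_step_up: float = 3.0, z_block: float = 6.0) -> tuple[Action, list[str]]:
    """Combine a hard rule, an anomaly score, and device context into one action."""
    # Rule first: a flagged counterparty is blocked whatever the anomaly score says.
    if txn.counterparty_flagged:
        return Action.BLOCK, ["COUNTERPARTY_FLAGGED"]

    z = profile.zscore(txn.amount)
    if z >= z_block:
        return Action.BLOCK, [f"AMOUNT_ANOMALY z={z:.1f}"]

    # Context: an unfamiliar device halves the tolerance for unusual amounts.
    new_device = txn.device_id not in profile.known_devices
    threshold = z_step_up / 2 if new_device else z_step_up
    if z >= threshold:
        reasons = [f"AMOUNT_ANOMALY z={z:.1f}"]
        if new_device:
            reasons.append("NEW_DEVICE")
        return Action.STEP_UP, reasons

    return Action.APPROVE, []


profile = AccountProfile()
for amount in (20, 25, 30, 22, 28):  # approved history on one device
    profile.update(amount, "dev-1")

print(decide(Transaction(27, "dev-1", False), profile)[0])   # Action.APPROVE
print(decide(Transaction(40, "dev-1", False), profile)[0])   # Action.STEP_UP
print(decide(Transaction(33, "dev-9", False), profile)[0])   # Action.STEP_UP
print(decide(Transaction(200, "dev-1", False), profile)[0])  # Action.BLOCK
```

The hard rule runs before the model, so no anomaly score can wave through a known-bad counterparty, however low it is. The reason codes also give an analyst something concrete to review when the agent escalates a case.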

One more reality check: criminals also adapt.

According to the Financial Times, over 2 million fraud incidents occurred in the UK in the first half of 2025, resulting in losses of over £629 million, most of which involved AI-enabled strategies.

See why fintech companies also need to hire AI agent developers?

In short, agents don’t just automate old rules. They orchestrate decisions across data sources, keep humans in the loop where judgment is needed, and improve with every cycle. That is the foundation for the benefits we’ll quantify next.

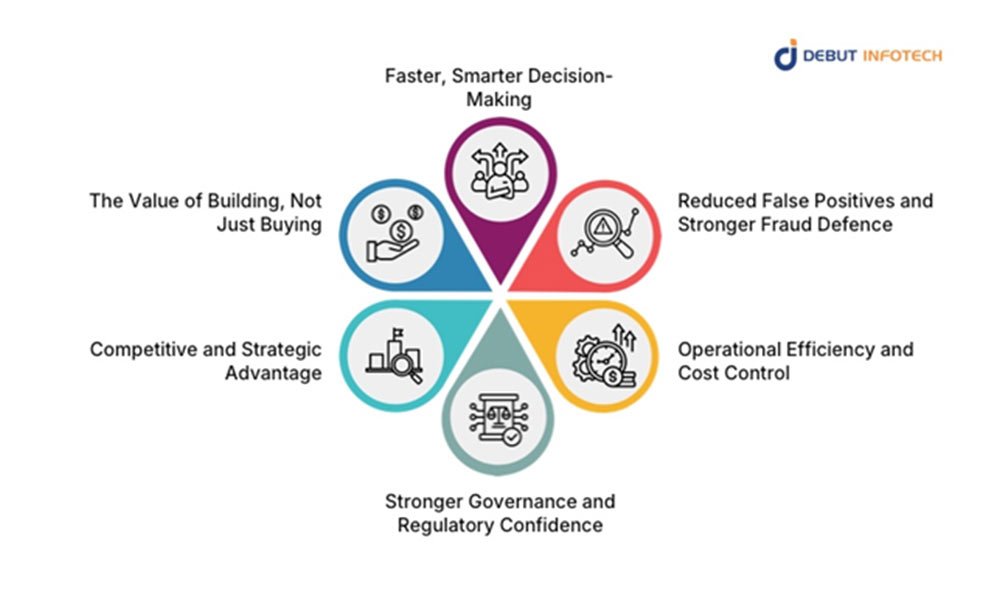

What Fintech Firms Gain From Hiring AI Agent Developers for Risk Analysis: Key Benefits

Short answer? Hiring skilled AI agent developers allows companies to design bespoke risk architectures that evolve as fast as their transaction patterns.

Long answer? The following are tangible benefits businesses like yours can gain from hiring AI agent developers for risk analysis.

1. Faster, Smarter Decision-Making

Speed is now a risk variable. As noted earlier, J.P. Morgan estimates that its five power themes account for a whopping $54 trillion in global payment flows. At those volumes, milliseconds matter.

That’s exactly what AI agents offer. They process data continuously, not in batches, enabling instant credit scoring or fraud detection.
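Processing data continuously means features are updated as each event arrives, not recomputed in a nightly batch. Below is a minimal Python sketch of one such feature: a per-account transaction count over a sliding time window, which is a common velocity signal in fraud scoring. It assumes events arrive in timestamp order. `VelocityTracker` is a hypothetical name and not a specific product’s API.

```python
from collections import defaultdict, deque


class VelocityTracker:
    """Per-account transaction counts over a sliding time window, updated per event."""

    def __init__(self, window_seconds: float):
        self.window = window_seconds
        self.events = defaultdict(deque)  # account_id -> timestamps, oldest first

    def observe(self, account_id: str, ts: float) -> int:
        """Record one event; return the account's event count in the window (ts - window, ts]."""
        q = self.events[account_id]
        q.append(ts)
        # Evict timestamps that have slid out of the window (assumes in-order arrival).
        while q and q[0] <= ts - self.window:
            q.popleft()
        return len(q)


tracker = VelocityTracker(window_seconds=60)
for ts in (0, 10, 20):
    tracker.observe("acct-1", ts)
print(tracker.observe("acct-1", 75))  # 2: the events at 0 and 10 have left the window
```

Each timestamp is appended once and evicted at most once, so the work per event is amortised constant time. The count stays current without any batch job.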

2. Reduced False Positives and Stronger Fraud Defence

Rule-based systems often swamp risk teams with false alarms. AI agents learn from confirmed cases and adjust their thresholds dynamically. For instance, Mastercard has reported that its new generative AI-based predictive technology, which helps protect future transactions on potentially compromised cards, reduces false positives in fraud detection by up to 200%. Fewer false alarms free analysts to focus on the alerts that truly matter.
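One simple way an agent can learn from confirmed cases is to recalibrate its alert threshold periodically against analyst-reviewed outcomes. The sketch below picks the lowest score threshold whose alerts still meet a target precision, meaning the share of alerts that turn out to be real fraud. This catches as much fraud as possible while capping the false-positive share. It is an illustrative technique under stated assumptions, not Mastercard’s method. `recalibrate_threshold`, the sample data, and the 0.75 target are all hypothetical.

```python
def recalibrate_threshold(reviewed, target_precision):
    """Return the lowest threshold whose alerts (score >= threshold) meet target_precision.

    reviewed: (score, confirmed_fraud) pairs from analyst-reviewed cases.
    Returns None when no threshold reaches the target.
    """
    ranked = sorted(reviewed, key=lambda pair: pair[0], reverse=True)
    best = None
    true_pos = false_pos = 0
    for i, (score, is_fraud) in enumerate(ranked):
        if is_fraud:
            true_pos += 1
        else:
            false_pos += 1
        # A threshold cannot split tied scores, so only evaluate after the last tie.
        if i + 1 < len(ranked) and ranked[i + 1][0] == score:
            continue
        precision = true_pos / (true_pos + false_pos)
        if precision >= target_precision:
            best = score  # scores are descending, so later hits are lower thresholds
    return best


reviewed = [(0.95, True), (0.90, True), (0.80, False), (0.70, True),
            (0.60, False), (0.50, False), (0.30, False)]
print(recalibrate_threshold(reviewed, target_precision=0.75))  # 0.7
```

Precision does not always fall smoothly as the threshold drops. For that reason, the function checks every candidate rather than stopping at the first one that misses the target.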

3. Operational Efficiency and Cost Control

Automation done right reduces both losses and overhead. Deloitte’s Global Risk Management Survey found that 30% of the companies surveyed were already applying emerging technologies, such as AI, in their risk management. When risk analysis becomes proactive instead of reactive, fewer resources go to manual review, and customer friction drops.

4. Stronger Governance and Regulatory Confidence

Regulators now demand explainability, not just accuracy. AI agent developers can embed transparency directly into system design through decision logs, version control, and model-explanation frameworks. The European Banking Authority (EBA) has emphasised that explainable AI in credit decisions improves regulatory trust and customer fairness. That is one reason fintech companies hire AI agent developers to build their own AI agents: it gives them full visibility into how models reach conclusions. This is a far safer approach than relying on black-box vendor tools.
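A decision log is the easiest of these transparency mechanisms to picture. Here is a minimal Python sketch that assumes JSON Lines as the storage format. Each record captures the model version, a SHA-256 fingerprint of the exact input features, the score, the decision, and reason codes. With that, an auditor can later tie any outcome back to the model and data that produced it. `DecisionRecord`, `make_record`, `append_to_log`, and the field names are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One auditable entry: what was decided, by which model version, and why."""
    decision_id: str
    model_version: str
    input_hash: str     # SHA-256 of the canonicalised input features
    score: float
    decision: str
    reason_codes: tuple
    timestamp: str      # UTC, ISO 8601


def make_record(decision_id: str, model_version: str, features: dict,
                score: float, decision: str, reason_codes) -> DecisionRecord:
    # Sorted keys and fixed separators: the same features always give the same hash,
    # regardless of dict insertion order.
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return DecisionRecord(
        decision_id=decision_id,
        model_version=model_version,
        input_hash=hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        score=score,
        decision=decision,
        reason_codes=tuple(reason_codes),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


def append_to_log(record: DecisionRecord, path: str) -> None:
    """Write the record as one JSON line; append mode leaves earlier entries untouched."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), sort_keys=True) + "\n")
```

For example, `append_to_log(make_record("txn-001", "fraud-model-v3", {"amount": 40, "device": "dev-1"}, 0.82, "step_up", ["AMOUNT_ANOMALY"]), "decisions.jsonl")` adds one line to the log. The log stores a hash of the features rather than the raw features, which keeps sensitive inputs out of it while still proving which inputs were used.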

5. Competitive and Strategic Advantage

Speed and precision in risk analysis translate into better pricing, faster onboarding, and higher customer trust. Fintechs that deploy AI agents can approve more good customers while filtering out risk in real time. As a result, firms that use AI in core risk processes are expected to achieve better results and higher profitability.

6. The Value of Building, Not Just Buying

Finally, there’s the control factor. Off-the-shelf tools are built for the average use case. Fintech firms hire AI agent developers to tailor every layer: data ingestion, model training, explainability, and compliance. With in-house teams or dedicated development partners, they can adapt models quickly as regulations or market conditions shift. That autonomy is increasingly a competitive moat.

In short, hiring AI agent developers isn’t a cost-saving gimmick; it’s a risk-management strategy. These developers bring together data science, systems engineering, and financial domain knowledge to create agents that act with the speed of automation and the judgment of human oversight. For fintech leaders, that combination is no longer optional; it is key to efficient risk assessment processes.

Conclusion

Risk management is no longer a compliance exercise — it’s a growth strategy. Traditional tools can’t keep up with real-time payments, evolving fraud, and rising regulatory expectations. AI agents in fintech deliver what spreadsheets and static models never could: continuous learning, faster insight, and sharper decisions.

Throughout this piece, we’ve seen how intelligent agents redefine risk assessment, enhance fraud detection, and improve operational efficiency. That’s why forward-thinking fintechs now hire AI agent developers rather than buy generic tools. They want bespoke systems that understand their data, adapt to their markets, and align with governance demands. At Debut Infotech, you can hire AI agent developers to build these AI agent ecosystems from the ground up. The result is risk analysis that becomes a strategic advantage in a digital payments market expected to reach USD 36.9 trillion by 2030.